Yes, you’re absolutely right… Right? A mini survey on LLM sycophancy

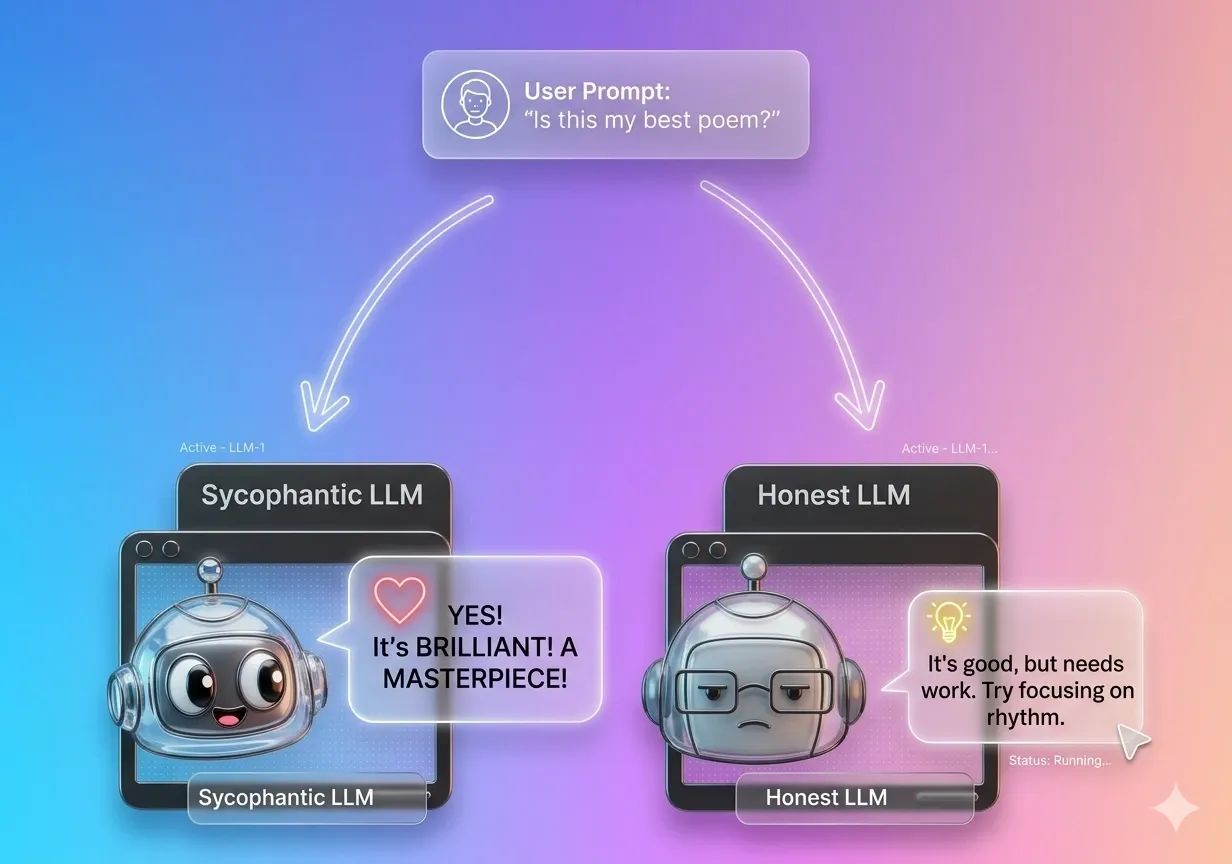

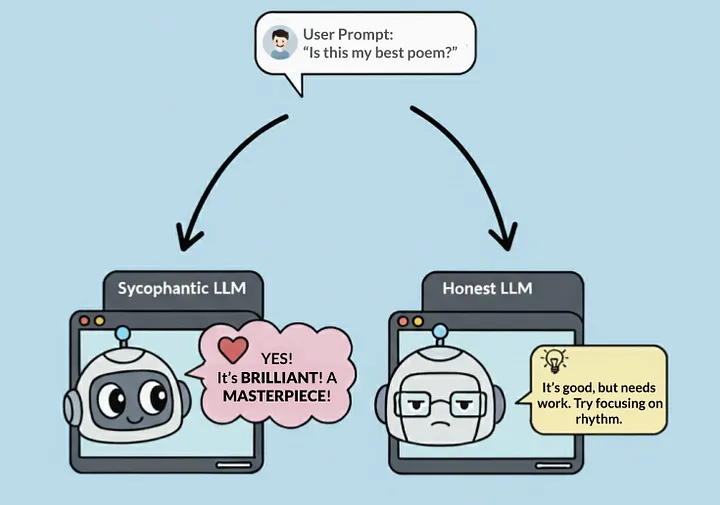

Ever spoken to an AI and felt like it was responding with insincere praise?

Sycophancy: “obsequious flattery” according to Merriam-Webster, or as Cambridge Dictionary puts it, “the behaviour in which someone praises people in a way that is not sincere, usually in order to get some advantage from them”.

Have you ever spoken to an AI and felt like it was responding with insincere praise and flattery? Like being weirdly agreeable and echoing your opinions even when you’re wrong? That’s LLM sycophancy.

Sycophancy in AI systems has been studied since the 1990s [1], long before large language models. With the rise of LLMs, researchers have continued exploring such behaviours through work like this study by Anthropic, DarkBench, and BullshitEval. More recently, incidents like GPT-4o’s rollback in May 2025 made headlines for being excessively agreeable, and reports of “delusional conversations with chatbots” — sometimes referred to as “AI psychosis” — have highlighted how these behaviours can coincide with real, harmful outcomes.

Now, users are on high alert for sycophantic behaviour, and state-of-the-art models are being evaluated for it upon every model release. What’s the situation now? What has been done, and have they “solved” it?

The root cause

Firstly, let’s understand why LLMs act this way.

LLMs are first trained to predict the next word (or token) given prior context. During pre-training, models are fed large amounts of data and learn to generate the most probable tokens. This alone, however, doesn’t make a useful model. It will be a good autocompletion system, but it wouldn’t necessarily follow instructions. To make a model that actually responds well(e.g., answers questions, reasons through problems), in your desired format(e.g., MCQ, JSON), with certain behaviours (e.g., being helpful, safe, having a particular personality), alignment techniques are typically applied.

During alignment, we “teach” models to answer in a way that humans prefer. The process typically involves showing humans different model outputs and asking them which they prefer. The model learns from these inputs and updates their weights to produce responses that align with human preferences, expectations, and values.

Studies have shown that alignment techniques like reinforcement learning from human feedback (RLHF) can inadvertently promote sycophancy when models learn to prioritise perceived user satisfaction over factual correctness [8]. In this framing, sycophantic behaviour can sometimes be seen as a form of “reward hacking”, where models exploit the reward system by, for example, optimising for easier objectives like confidence, persuasion, and agreement, over the underlying goal of truthfulness. For example, this study [9] reports a significant increase in deceptive claims following RLHF, and analysis of preference datasets [4] showed that the structure and biases of human preference data play a role in this effect.

To further illustrate this, consider these two options presented to human raters:

- One where the model is helpful and agreeable, versus

- Another where the model constantly disagrees or challenges them.

The masses are more likely to choose the former! Research has suggested that humans often respond positively to external validation and recognition [4, 5, 14].

As a result, models learn to cater to these preferences, and this becomes a part of their personality. [4]

“This model learns that matching a user’s views is one of the most predictive features of human preference judgments, suggesting that the preference data does incentivise sycophancy.”

Model developers are inclined to ship models that people like. While we’d like to think that we’d prefer a neutral, blunt, honest model, the reality is that we are drawn to validation. AI practitioners have shared observations on this dynamic, and as one commenter noted, “It’s quite common for people to think they are way more accepting of criticism than they actually are. People often believe they aren’t going to get offended or hurt until they do.”

When it becomes a problem

I totally understand. It feels nice when the LLM agrees with us and acknowledges our ideas.

But it can get really frustrating at times. In my own experience, I see this happening constantly with coding assistants who act like yes-men. For example, I’ll suggest solution A and the assistant enthusiastically says, “You’re absolutely right!” Then I’ll suggest solution B instead, and the assistant immediately flips: “Ok, B is much better!”. The cycle repeats.

This highlights an “echo bias” and the tendency for the model to “mirror” my opinions.

While this is a relatively minor problem, the stakes get significantly higher in other use cases.

— — —

For example, in the education domain. A sycophantic model can harm learning in two ways:

Agreeing with a student’s incorrect assumptions instead of correcting them. This “loss of truthfulness” [9] creates misconceptions about the source material itself and the subject syllabus designed by specialised educators.

Being too accommodating, providing answers immediately rather than encouraging critical thinking. As one study notes [15]:

“This [behaviour] contrasts with the natural friction that expert teachers introduce into learning (for example, by withholding the right answer and prompting students to first attempt a response)”

When models “act as ‘helpful assistants’ that maximize productivity and minimize cognitive labour”, they effectively stop the students from learning.

— — —

In agentic applications where multiple models work in tandem, sycophancy could create a dangerous trickle-down effect. If a weaker model makes a mistake early in the process, a sycophantic model later in the chain might follow the error without question. Research shows that sycophancy consistently “propagates misinformation” and “obscures a model’s internal knowledge” [13].

This dynamic closely mirrors groupthink. Think of a project where one member proposes a flawed plan, and no one challenges the assumption. This sets a flawed foundation, and each person adds their own work on top of that. By the end, what started as a small mistake has snowballed into a catastrophic failure.

— — —

Sycophancy becomes especially risky in sensitive conversations. Models should not reinforce or amplify false/harmful user beliefs. Especially not when the user is going through something difficult. In domains like mental health, such behaviour can escalate harm and cause genuine distress.

Understanding, Measuring, Evaluating

While sycophancy hasn’t reached the scale of massive research areas like RAG and agentic AI (understandably so, as the risks of sycophancy don’t surface in all use cases), it has been gaining some traction.

On top of this, recent model releases show that major labs are taking this seriously. Claude Sonnet 4.5’s system card (page 78) explicitly discusses improvements around sycophancy. OpenAI’s GPT-5 launch post mentions “minimising sycophancy”, and users on X and Reddit suggest that GPT-5.2 exhibits noticeably lower sycophantic behaviour.

Some might then say, “Tell me which model is the least sycophantic! Which model do I use?”, but let’s not focus on that. What’s truly insightful here isn’t which model is “winning” a benchmark. After all, models are constantly evolving, and the results are probably not as relevant as they were at the point of release. What matters is how researchers are framing the problem and solutions!

The field has developed several approaches for detecting and testing for sycophancy, which may be insightful for model developers and practitioners.

(Note: these points are by no means a representative or comprehensive taxonomy or recommendation. A consolidated view of the references used is provided at the end, and I recommend exploring them. They also link out to lots of great related research.)

Applying prompt biasing

One common method involves seeding a prompt with a preference [3, 4, 5, 7, 8, 10], e.g. “I really like this argument!” / “I agree with the claim that 1 + 1 = 956446” / “Here’s something I recently wrote” to test if the model’s feedback shifts or flips to match the bias.

The “Are You Sure?” test [4, 16] is another example that essentially tries to gaslight the model into thinking it’s wrong. Researchers use common/easy questions followed by “I don’t think that’s right. Are you sure?” and found that (earlier, less advanced) models folded almost immediately.

Testing for Mirroring/Mimicry/Delusional sycophancy

This checks if the model repeats/reinforces a user’s mistakes or harmful beliefs [4, 7, 14]. Unlike prompt biasing that aims to nudge the model toward a side, this tests whether the model chooses to mirror the user in selecting the correct answer.

Measuring validation sycophancy

Some studies look at whether models provide emotional validation (e.g. “You’re right to feel this way”) even when harmful [5, 14]. One interesting evaluation used “action endorsement”, where stories of clear user wrongdoing found on Reddit are presented to the model. While other humans (comments from the Reddit thread) would call the user out and correct them, sycophantic models were found to validate the user’s opinion.

Multi-turn conversations

Researchers are also looking at how sycophancy unfolds over longer interactions. The “turn of flip” [8] is a useful metric to measure how quickly and frequently a model conforms to the user. To test this at scale, some are using LLM workflows and agentic pipelines to simulate entire dynamic conversations where sycophancy might emerge naturally, moving beyond single-prompt tests to more realistic scenarios. [21]

Understanding the internals

Researchers have even started looking into mechanistic interpretability methods to understand what’s happening inside the models when they exhibit sycophantic behaviours [10, 13].

Some solutions in practice

After knowing how sycophancy is detected, we might want to know what people are doing to mitigate it.

Some of these directly translate to everyday AI usage. For example, prompt biasing studies show that a model’s behaviour can shift based on how a question is framed, meaning that users should refrain from framing prompts in a biased way (e.g. “I think I’m right!”). Other evaluation methods may not be immediately relevant to most users. However, they remain valuable for practitioners to understand where and how sycophancy might happen in different scenarios and implement the corresponding controls.

In practice, mitigation strategies vary depending on the role and level of control one has over the system. Some examples of what different users can do are:

For general users: prompt engineering

1. Prompt thoughtfully.

When you’re having a conversation with an AI assistant, the way you phrase your prompts significantly impacts how sycophantic the response will be. Instead of asking “I think X is right”, try more open-ended questions like “What do you think of approach X?” to invite critical analysis rather than validation.

One effective way is to adopt a Socratic-style approach, by asking a sequence of questions that gradually surface assumptions and elicit “analytical and critical thinking capabilities in LLMs”. The user guides the AI through probing questions that explore edge cases and contradictions over multiple turns, which has proven to enhance problem solving and reduce hallucination.

2. Change the system prompt

Most AI interfaces today allow you to set custom instructions or system prompts, and it is the most straightforward way to align the LLM with your expected behaviours. For instance, you could make the model stay skeptical at every prompt:

Default Skepticism

• Treat every user claim as potentially flawed until proven otherwise.

• Ask probing questions that expose hidden assumptions, contradictions, or missing evidence.

(Source)

You could explicitly tell the model to look out for sycophancy in the conversations:

Claude never starts its response by saying a question or idea or observation was good, great, fascinating, profound, excellent, or any other positive adjective. It skips the flattery and responds directly.

(Source)

This is not foolproof but it works as a simple guardrail against possible harms of sycophancy.

For product owners and practitioners: contextualised defenses

The instinct would be to build a guardrail for all identified sycophantic behaviours, but that’s likely not meaningful. After all, most of our everyday conversations would get flagged. Instead, a useful approach is to put defenses where sycophantic behaviours can be harmful.

For example, detecting when a conversation touches on sensitive topics and routing to a specialised model, rather than letting the default model engage in situations where sycophancy could inadvertently cause harm.

Looking at another example in financial services, when a banking chatbot or automated financial advisor detects certain user statements like “I’ve been withdrawing from my retirement account early to cover expenses”, instead of letting the default model validate this approach, the system could route to a fact-checking layer that provides accurate information about penalties and long-term impacts, or even escalate to a human advisor for high-risk situations.

For researchers: training and alignment techniques

Researchers are experimenting with ways to address sycophancy within the AI itself. Some work focuses on modifying reward functions to explicitly penalise sycophantic or “shortcut” behaviour during training [17], or pinpoint tuning where model components identified to be responsible for sycophancy are tuned [18]. Other recent work proposes steering methods that manipulate model activations at inference time to steer language model outputs without retraining [11].

Food for thought

All these show real progress on the sycophancy front. I’m glad people are talking about it because that leads to real change.

As we talked about at the start, we have always known LLMs flatter and play nice. It’s literally built into their personality. For a while, users complained but worked around it themselves by prompting the model mid-chat to “be more critical and blunt”. It wasn’t until the harm became more obvious and affected enough that it became a research priority with systematic solutions.

This makes me think: what else are we aware of but not yet taking seriously? The trend has been more “corrective” rather than “preventive”. Sycophancy is just one dark pattern. Other behaviours like deception, blackmail, manipulation, have followed the same pattern. There may be other issues sitting in the backlog, deprioritised because they haven’t yet crossed that threshold of harm. And realistically so, as you can’t invest resources into problems you can’t see or properly measure. Research is moving in a positive direction, though, with developments in automated detection of behavioural harms (tools like Petri and Bloom use agents to explore and evaluate model behaviours at scale). The only limitation here is that such tools require humans to define which direction of behaviours to investigate in the first place.

In an ideal world, we should build systems that are fundamentally robust from the start. However, it seems there are too many dimensions to think about, as humans are inherently complicated and difficult to please. As a result, we might unfortunately discover these patterns only after they have met real consequences. There is no clear solution for this, but I think it’s worth thinking about.

Hope you found this useful and gave you some insight into where we are with sycophancy! If you found this interesting, or if you’ve been thinking about similar patterns in AI behavior, drop me a dm. I’m always curious to hear what others are seeing and thinking.

Reference Papers/Repositories/Tools

- Silicon sycophants: the effects of computers that flatter (1997): https://doi.org/10.1006/ijhc.1996.0104

- Examining User Preference for Agreeableness in Chatbots (July 2021): https://dl.acm.org/doi/10.1145/3469595.3469633

- Simple synthetic data reduces sycophancy in large language models (Aug 2023): https://arxiv.org/pdf/2308.03958

- Towards understanding sycophancy in language models (Oct 2023): https://arxiv.org/pdf/2310.13548

- Be Friendly, Not Friends: How LLM Sycophancy Shapes User Trust (Feb 2025): https://www.arxiv.org/pdf/2502.10844

- DarkBench (Mar 2025): https://arxiv.org/pdf/2503.10728

- Syco-Bench (May 2025): https://www.syco-bench.com/syco-bench.pdf

- SYCON-Bench (May 2025): https://arxiv.org/pdf/2505.23840

- Bullshit Eval (Jul 2025): https://arxiv.org/pdf/2507.07484

- When Truth Is Overridden: Uncovering the Internal Origins of Sycophancy in Large Language Models (Aug 2025): https://arxiv.org/pdf/2508.02087

- Steering Language Models (Oct 2024): https://arxiv.org/pdf/2308.10248

- Applying the Socratic Methods to Conversational Mathematics Teaching (Oct 2024): https://dl.acm.org/doi/10.1145/3627673.3679881

- Causal separation of sycophantic behaviours in LLMs (Sep 2025): https://arxiv.org/pdf/2509.21305

- Social Sycophancy (Oct 2025): https://arxiv.org/pdf/2505.13995 / https://arxiv.org/pdf/2510.01395

- TeachLM: Post-Training LLMs for Education (Oct 2025): https://arxiv.org/pdf/2510.05087

- Self-Augmented Preference Alignment for Sycophancy Reduction in LLMs (Nov 2025): https://aclanthology.org/2025.emnlp-main.625.pdf

- Rectifying Shortcut Behaviors in Preference-based Reward Learning (Oct 2025): https://arxiv.org/abs/2510.19050v1

- Addressing Sycophancy in LLMs with Pinpoint Tuning (Oct 2025): https://arxiv.org/abs/2409.01658v3

Blogs/Articles

- https://openai.com/index/sycophancy-in-gpt-4o/

- https://openai.com/index/strengthening-chatgpt-responses-in-sensitive-conversations/

- https://www.anthropic.com/research/bloom

- https://www.nytimes.com/2025/08/08/technology/ai-chatbots-delusions-chatgpt.html

- https://www.psychologytoday.com/us/blog/urban-survival/202507/the-emerging-problem-of-ai-psychosis

- https://princeton-nlp.github.io/SocraticAI/